Tiny AI hardware integrates a low-power, compact NPU with an MCU and adapts to the mainstream 3D sensors on the market. It supports three mainstream 3D sensing technologies (structured light, ToF, and binocular stereo vision) to meet recognition needs for voice, images, and more.

Development of Tiny AI:

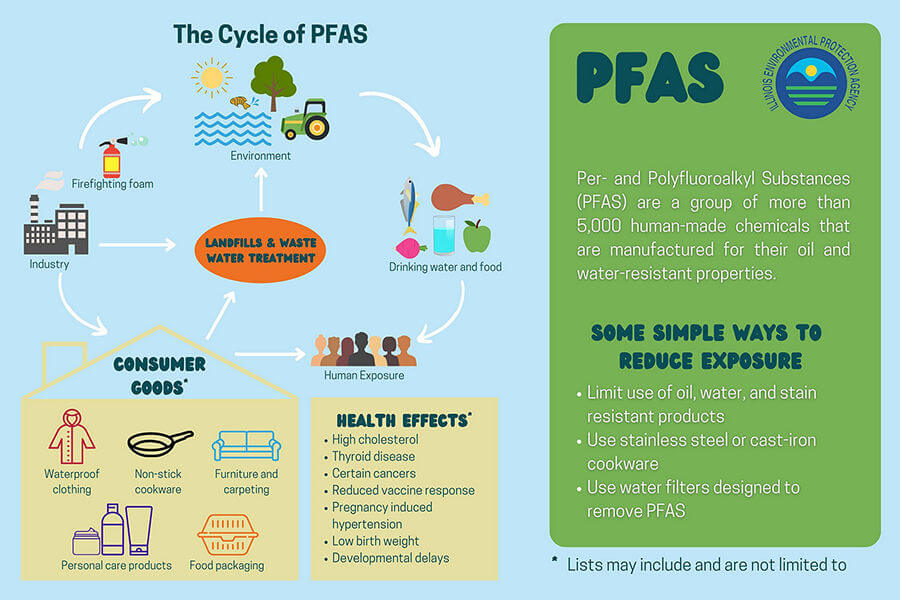

Although artificial intelligence has brought great technological innovation, it has a problem: to build more powerful algorithms, researchers are using more and more data and computing power and relying on centralized cloud services. This not only produces staggering carbon emissions but also limits the speed and privacy of AI applications.

In general, the more complex the model and the larger its number of parameters, the higher the inference accuracy it can reach. Training and inference for such models therefore require extremely high-performance computing equipment. To run AI applications on MCUs with low computing power and little memory, only smaller AI applications, smaller machine learning algorithms, or even ultra-miniature deep learning models can be selected for inference. This is the aim of Tiny ML: to shrink existing deep learning models without losing their capabilities. At the same time, a new generation of dedicated AI chips promises to pack more computing power into a more compact physical space and to train and run AI with less energy.
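
One common way to shrink a model for an MCU is to store its weights as 8-bit integers instead of 32-bit floats, which cuts weight storage by roughly a factor of four. The following is a minimal sketch of affine quantization in plain Python. The function names and the sample weights are illustrative assumptions, not part of any particular toolkit.

```python
def quantize_int8(weights):
    """Affine quantization of float weights to signed 8-bit integers."""
    w_min, w_max = min(weights), max(weights)
    # The scale maps the float range onto the 255 steps between -128 and 127.
    scale = (w_max - w_min) / 255 or 1.0  # fall back to 1.0 for constant input
    # The zero point is the integer that represents the float value 0.0.
    zero_point = round(-128 - w_min / scale)
    quantized = [
        max(-128, min(127, round(w / scale) + zero_point)) for w in weights
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate float weights from their int8 representation."""
    return [(q - zero_point) * scale for q in quantized]


# Hypothetical weights for illustration only.
weights = [-0.62, -0.1, 0.0, 0.27, 0.91]
quantized, scale, zero_point = quantize_int8(weights)
restored = dequantize(quantized, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each restored weight differs from the original by at most about half a quantization step (`scale / 2`) when no clamping occurs. That small error is the trade-off for the reduced memory footprint.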

What is Tiny AI?

Tiny AI refers to a new approach to AI and ML that uses compression algorithms to reduce the need for large amounts of data and computing power. It is a new field in machine learning that aims to shrink artificial intelligence algorithms, especially those for voice and speech recognition. It also reduces carbon emissions.
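
Knowledge distillation is one widely used compression approach: a small "student" model is trained to match the output distribution of a large "teacher" model. The sketch below shows only the soft-label loss term, assuming temperature-softened softmax outputs. The logits and function names are hypothetical, and real systems usually combine this term with other losses.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened outputs, scaled by temperature^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


# Hypothetical logits for a three-class problem.
teacher = [4.0, 1.0, 0.2]
good_student = [3.8, 1.1, 0.3]  # closely imitates the teacher
poor_student = [0.2, 1.0, 4.0]  # ranks the classes in the opposite order

good_loss = distillation_loss(good_student, teacher)
poor_loss = distillation_loss(poor_student, teacher)
```

A student that imitates the teacher well gets a loss near zero, so minimizing this quantity pushes the small model toward the large model's behavior.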

What are the Components of Tiny AI or Tiny ML?

- Small data:

Big data that researchers have transformed through distillation and compression in machine learning is called tiny data. Using tiny data means using data more intelligently, and compression techniques such as network pruning are an inherent part of the transformation from big data to tiny data.

- Data reduction through techniques such as proxy modeling.

- Alternative data sources.

- Unsupervised learning methods.

- Compression strategies such as network pruning.

- AI-assisted data processing.
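
The network pruning strategy listed above can be sketched in its simplest form, magnitude pruning, which zeroes out the weights with the smallest absolute values. This is a minimal illustration under stated assumptions: the `magnitude_prune` helper and the sample layer are hypothetical, and practical pruning is usually followed by fine-tuning.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices sorted by ascending magnitude; the first n_prune get removed.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


# Hypothetical layer weights for illustration only.
layer = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.0, -0.9]
pruned_layer = magnitude_prune(layer, sparsity=0.5)
```

With half of the weights set to zero, the layer can be stored in a sparse format and many multiplications can be skipped at inference time.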

- Small hardware:

Thanks to advances in technology, Tiny AI could help developers produce tiny hardware firewalls and routers that keep your devices safe even when traveling.

- New architectures.

- New structures such as 3D integrated systems.

- New materials.

- New packaging solutions.

- Tiny Algorithm:

The Tiny Algorithm, or Tiny Encryption Algorithm (TEA), is a block cipher whose strengths lie in its simplicity and ease of implementation. Tiny algorithms usually provide the desired results in just a few lines of code.

- New edge learning methods.

- Alternatives to ANN architectures.

- Sensor fusion strategies and GPU programming languages.

- Adaptive inference technology.

- Transfer learning methods.
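
To show how few lines the Tiny Encryption Algorithm mentioned above needs, here is a sketch of its standard 32-round form, which works on a 64-bit block with a 128-bit key. Big-endian byte packing and the sample key are assumptions made for this example. TEA has known cryptographic weaknesses, so treat this as an illustration of compact code, not as a recommendation for new designs.

```python
import struct

DELTA = 0x9E3779B9  # key schedule constant derived from the golden ratio
MASK = 0xFFFFFFFF   # keeps arithmetic within 32 bits


def tea_encrypt(block, key):
    """Encrypt one 8-byte block with a 16-byte key (32 rounds)."""
    v0, v1 = struct.unpack(">2I", block)  # big-endian is an assumption
    k0, k1, k2, k3 = struct.unpack(">4I", key)
    total = 0
    for _ in range(32):
        total = (total + DELTA) & MASK
        v0 = (v0 + (((v1 << 4) + k0) ^ (v1 + total) ^ ((v1 >> 5) + k1))) & MASK
        v1 = (v1 + (((v0 << 4) + k2) ^ (v0 + total) ^ ((v0 >> 5) + k3))) & MASK
    return struct.pack(">2I", v0, v1)


def tea_decrypt(block, key):
    """Reverse tea_encrypt by running the rounds backwards."""
    v0, v1 = struct.unpack(">2I", block)
    k0, k1, k2, k3 = struct.unpack(">4I", key)
    total = (DELTA * 32) & MASK
    for _ in range(32):
        v1 = (v1 - (((v0 << 4) + k2) ^ (v0 + total) ^ ((v0 >> 5) + k3))) & MASK
        v0 = (v0 - (((v1 << 4) + k0) ^ (v1 + total) ^ ((v1 >> 5) + k1))) & MASK
        total = (total - DELTA) & MASK
    return struct.pack(">2I", v0, v1)


# Hypothetical key and message for illustration only.
key = bytes(range(16))
plaintext = b"tiny ai!"
ciphertext = tea_encrypt(plaintext, key)
recovered = tea_decrypt(ciphertext, key)
```

The whole cipher uses only shifts, additions, and XORs, which is why it fits comfortably on small microcontrollers.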

Why do You Need Tiny AI?

Training a complex AI model requires a lot of computation, and AI adoption now spans multiple domains, so efficient and green technology is important. The GPU (graphics processing unit) is a major contributor to heat generation. New artificial intelligence models that help with translation, writing, and speech recognition have adverse effects on the environment because of the carbon dioxide emitted when they are trained.

To achieve maximum accuracy with an AI model, developers can end up generating approximately 700 to 1,400 pounds of carbon dioxide. Large-scale NLP experiments take a heavy toll on the environment. Training BERT, the Transformer-based machine learning model that helps Google process conversational queries, generates approximately 1,400 pounds of carbon dioxide, which makes it one of the more carbon-intensive models to train. There is therefore an urgent need for Tiny AI to reduce the carbon emissions that harm the environment.

What are the Applications of Tiny AI?

- Finance:

Many investment banks are leveraging AI for data collection and predictive analytics. Tiny AI can help financial institutions transform large datasets into smaller ones to simplify the process of predictive analytics.

- Teaching:

Devices based on simple ML algorithms help reduce teachers' workload. VR headsets are also widely used and provide students with a rich experience.

- Manufacturing:

As technology advances, robots will collaborate with humans to ease their workload. Tiny ML can help companies by analyzing sensor data.

- Healthcare:

Healthcare promises personalized medicine, driven by our improved ability to collect data and turn it into actionable insights. In genomics, improvements in data usage, algorithms, and hardware lead to faster results. Connected health solutions make it easy to collect medical-grade data for clinical research or continuous monitoring through wearable, implantable, ingestible, or contactless technologies. Artificial intelligence can then be used to personalize treatment for patients.

- Logistics:

Tiny AI has further applications in autonomous and connected cars. To improve safety, the driver's health can be checked continuously by capacitive sensors in the seat and radar systems in the dashboard. Gesture recognition technology lets you control the in-car entertainment system with a flick of the wrist. Augmenting insights through collaborative sensor fusion is critical for autonomous vehicles, which rely on multiple sensors to obtain a complete picture of their surroundings.
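
As a rough illustration of the sensor fusion idea, the sketch below combines two noisy estimates of the same quantity by inverse-variance weighting, so the more reliable sensor gets more influence. The sensor names, readings, and variances are hypothetical. Production systems typically use richer methods such as Kalman filters.

```python
def fuse(readings):
    """Combine (value, variance) pairs by inverse-variance weighting."""
    weights = [1.0 / variance for _, variance in readings]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, readings)) / total
    # The fused variance is never larger than the best single sensor's.
    return value, 1.0 / total


# Hypothetical distance estimates in meters: (value, variance).
radar = (25.0, 4.0)   # noisier sensor
camera = (27.0, 1.0)  # more precise sensor
distance, variance = fuse([radar, camera])
```

The fused estimate lands closer to the more precise sensor, and its variance is lower than either input's, which is the benefit of combining sensors instead of trusting one alone.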

Advantages of Tiny AI or Tiny ML:

- Energy efficient:

Training a single large AI model can emit as much as 284 tons of CO2, about five times the lifetime emissions of the average car. Tiny AI models produce far lower carbon emissions and so contribute much less to global warming. TinyBERT is an energy-efficient variant of BERT that is 7.5 times smaller than the original, yet it retains about 96% of the original model's performance.

- Cost-effectiveness:

Artificial intelligence models are very expensive: reaching maximum accuracy costs a lot of money. Tiny AI models are cheap compared to big-budget voice assistants.

- Fast:

Compared to traditional AI models, Tiny AI is not only energy efficient and cheap but also faster. Compared to the original BERT model, TinyBERT is 9.4 times faster at inference.

Machine learning is now used almost everywhere, and most applications run it somewhere. With simple, tiny algorithms, deep learning can achieve high energy efficiency. A voice interface, for example, uses a wake-word system to activate the voice assistant's detection task. In the past, voice assistant systems were developed on large datasets, but a recently developed full speech recognition system can run natively on Pixel phones, which is a breakthrough for Tiny ML researchers.

Tiny AI is the future of AI: it is energy efficient, cost-friendly, and fast.